Cheng Zhang¹, Cengiz Öztireli², Stephan Mandt³, Giampiero Salvi⁴

¹Microsoft Research, Cambridge, UK

²Disney Research, Zurich, Switzerland

³University of California, Irvine, Los Angeles, USA

⁴KTH Royal Institute of Technology, Stockholm, Sweden

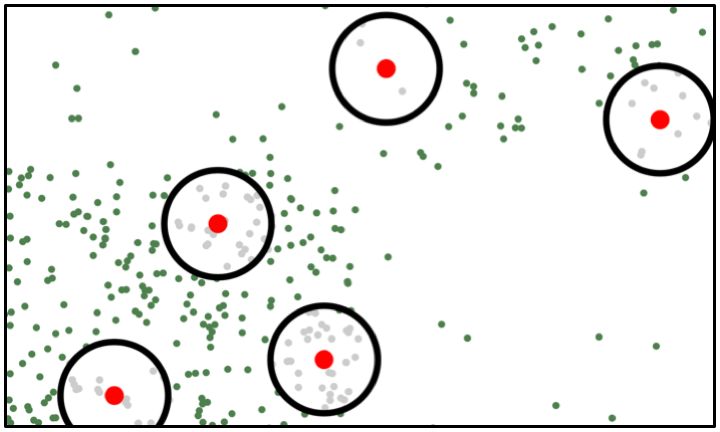

The convergence speed of stochastic gradient descent (SGD) can be improved by actively selecting mini-batches. We explore sampling schemes in which similar data points are less likely to be selected in the same mini-batch, and we prove that such repulsive sampling schemes lower the variance of the gradient estimator. This generalizes recent work on using Determinantal Point Processes (DPPs) for mini-batch diversification (Zhang et al., 2017) to the broader class of repulsive point processes. We first show that the variance reduction obtained by diversified sampling extends, in particular, to non-stationary point processes. We then show that other point processes can be computationally much more efficient than DPPs. Specifically, we propose and investigate Poisson Disk sampling, a technique frequently encountered in the computer graphics community, for this task. We show empirically that our approach improves over standard SGD in terms of both convergence speed and final model performance.
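To make the idea of repulsive mini-batch selection concrete, the following is a minimal sketch of Poisson Disk sampling by simple dart throwing: candidates are drawn uniformly at random and rejected if they lie within a given radius of a point already in the batch. It is an illustration under stated assumptions, not necessarily the procedure used in the paper. The function name `poisson_disk_minibatch`, the Euclidean distance on raw feature vectors, and the `radius` and `max_tries` parameters are all hypothetical choices made for this example.

```python
import numpy as np

def poisson_disk_minibatch(features, batch_size, radius, rng, max_tries=1000):
    """Dart-throwing sketch (hypothetical helper, not the paper's code).

    Draws indices uniformly at random and rejects any candidate that is
    closer than `radius` (Euclidean distance) to a point already chosen.
    May return fewer than `batch_size` indices if `radius` is too large.
    """
    n = features.shape[0]
    chosen = []
    for _ in range(max_tries):
        if len(chosen) == batch_size:
            break
        i = int(rng.integers(n))
        if i in chosen:
            continue  # never pick the same data point twice
        if chosen:
            dists = np.linalg.norm(features[chosen] - features[i], axis=1)
            if np.any(dists < radius):
                continue  # too similar to an already selected point
        chosen.append(i)
    return np.array(chosen, dtype=int)

# Toy usage on synthetic 2-D data (assumed for illustration only).
rng = np.random.default_rng(0)
X = rng.normal(size=(500, 2))
batch = poisson_disk_minibatch(X, batch_size=10, radius=0.3, rng=rng)
```

The returned indices can be used to form one SGD mini-batch. The radius controls the strength of the repulsion: a radius of zero recovers ordinary uniform sampling without replacement, while a larger radius forces the selected points to be more spread out.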
